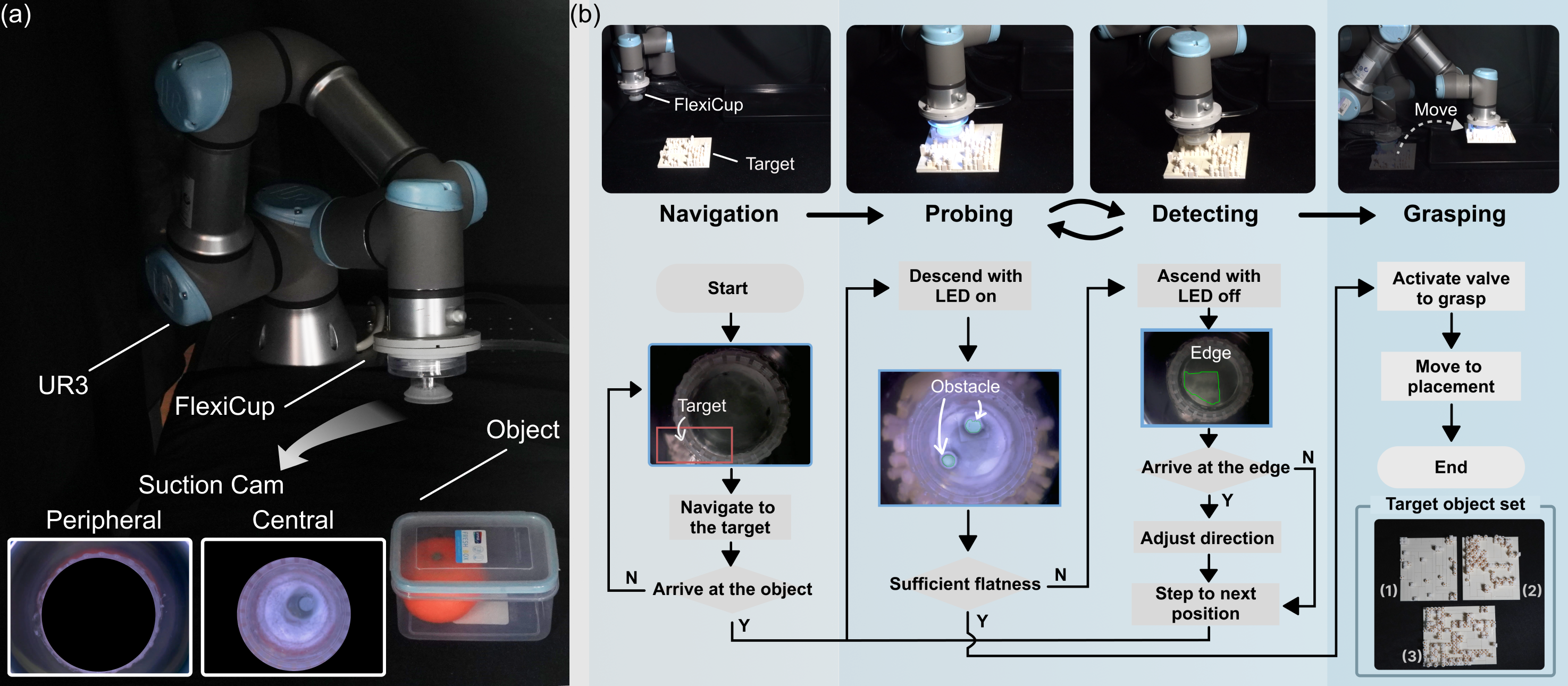

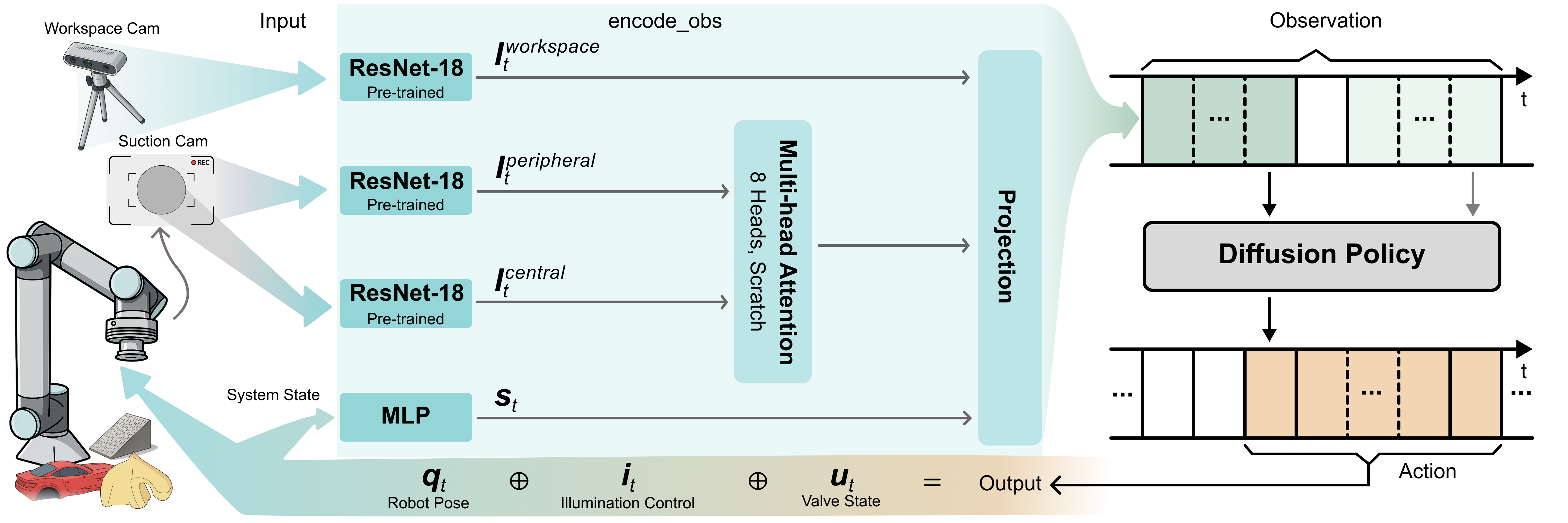

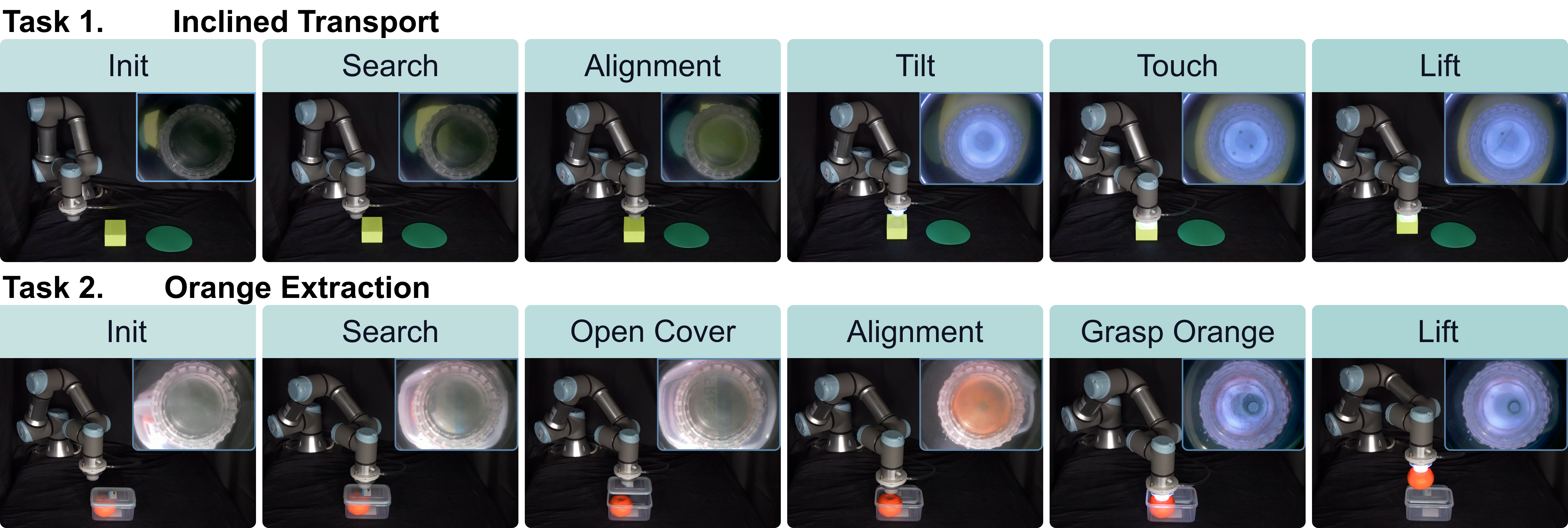

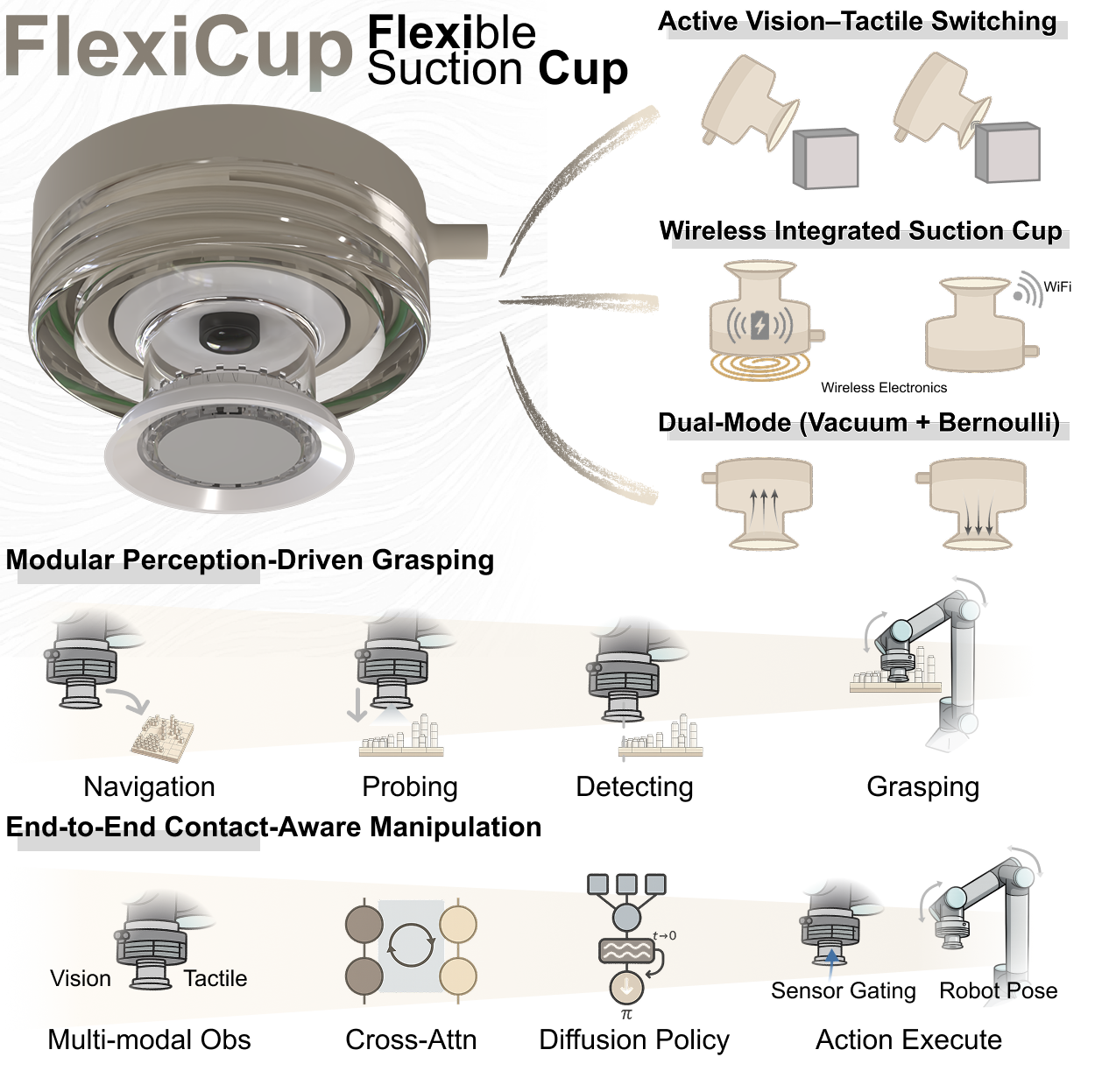

System Overview

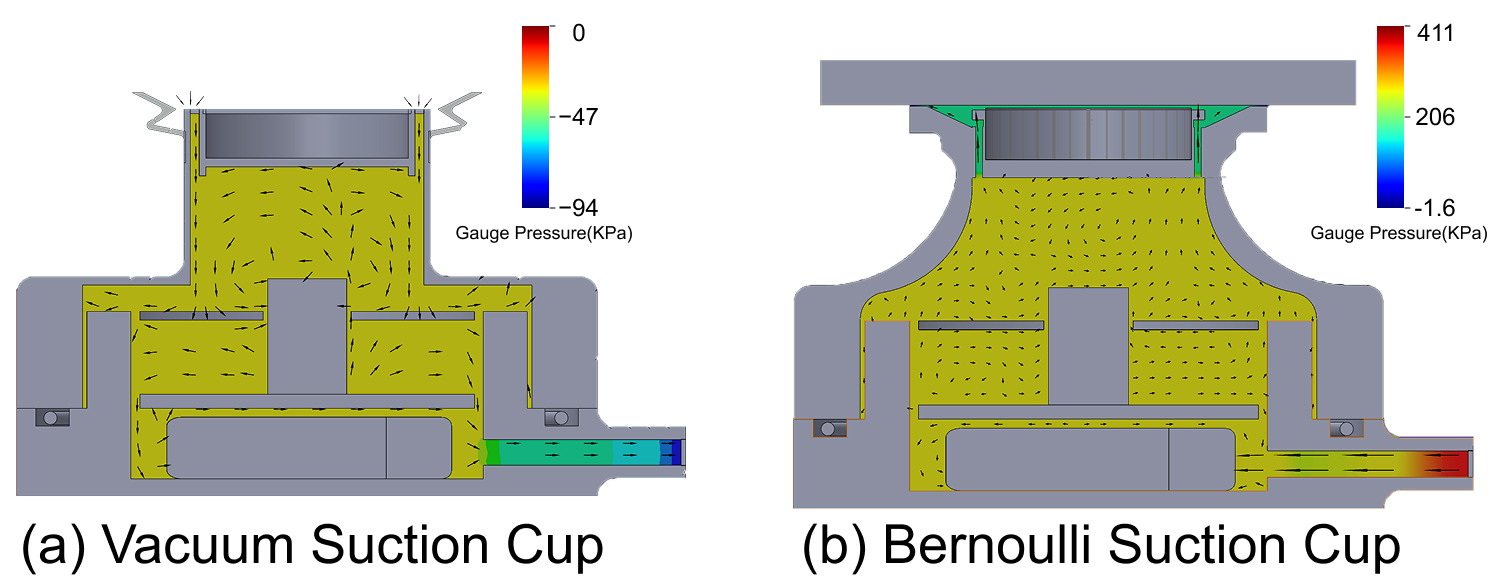

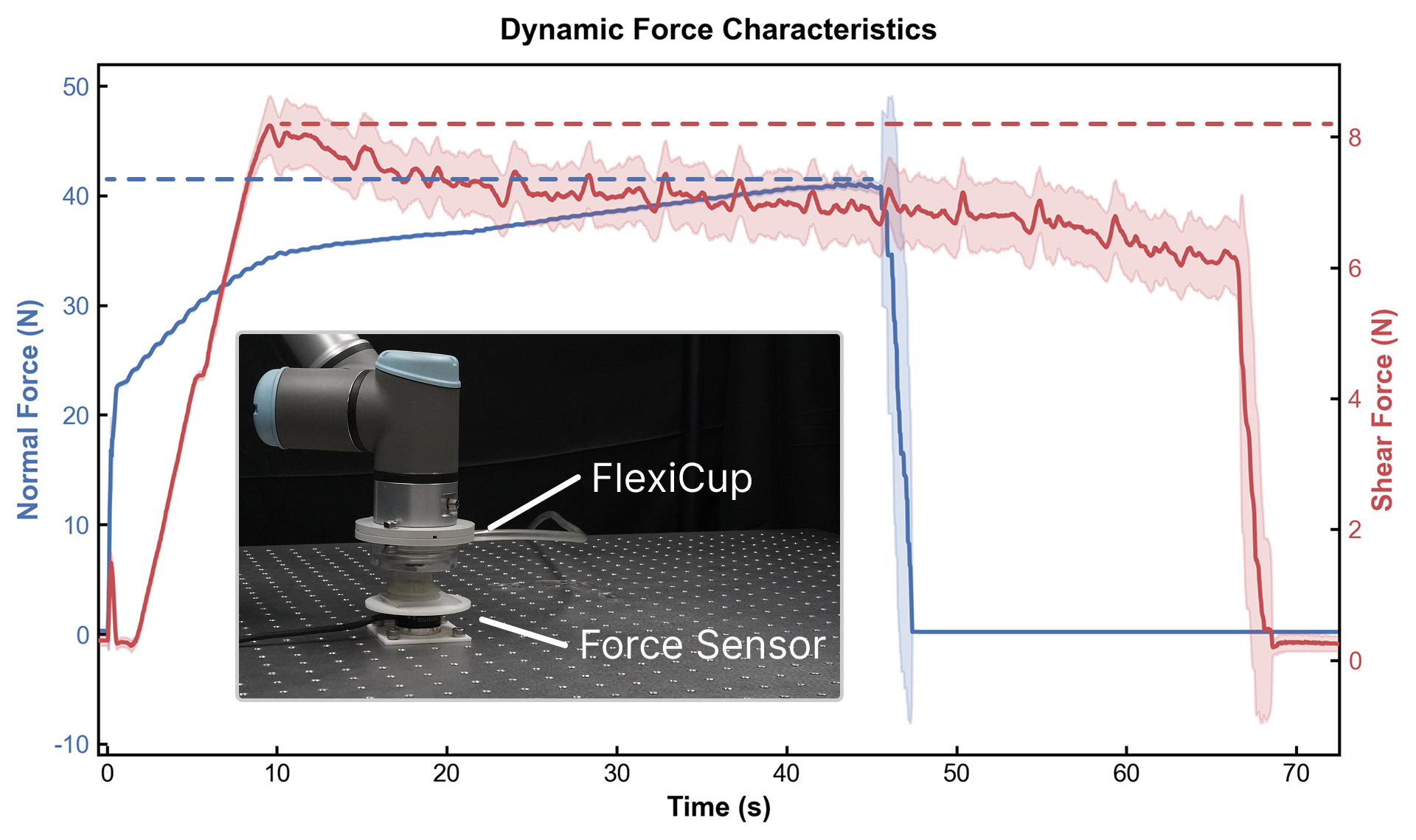

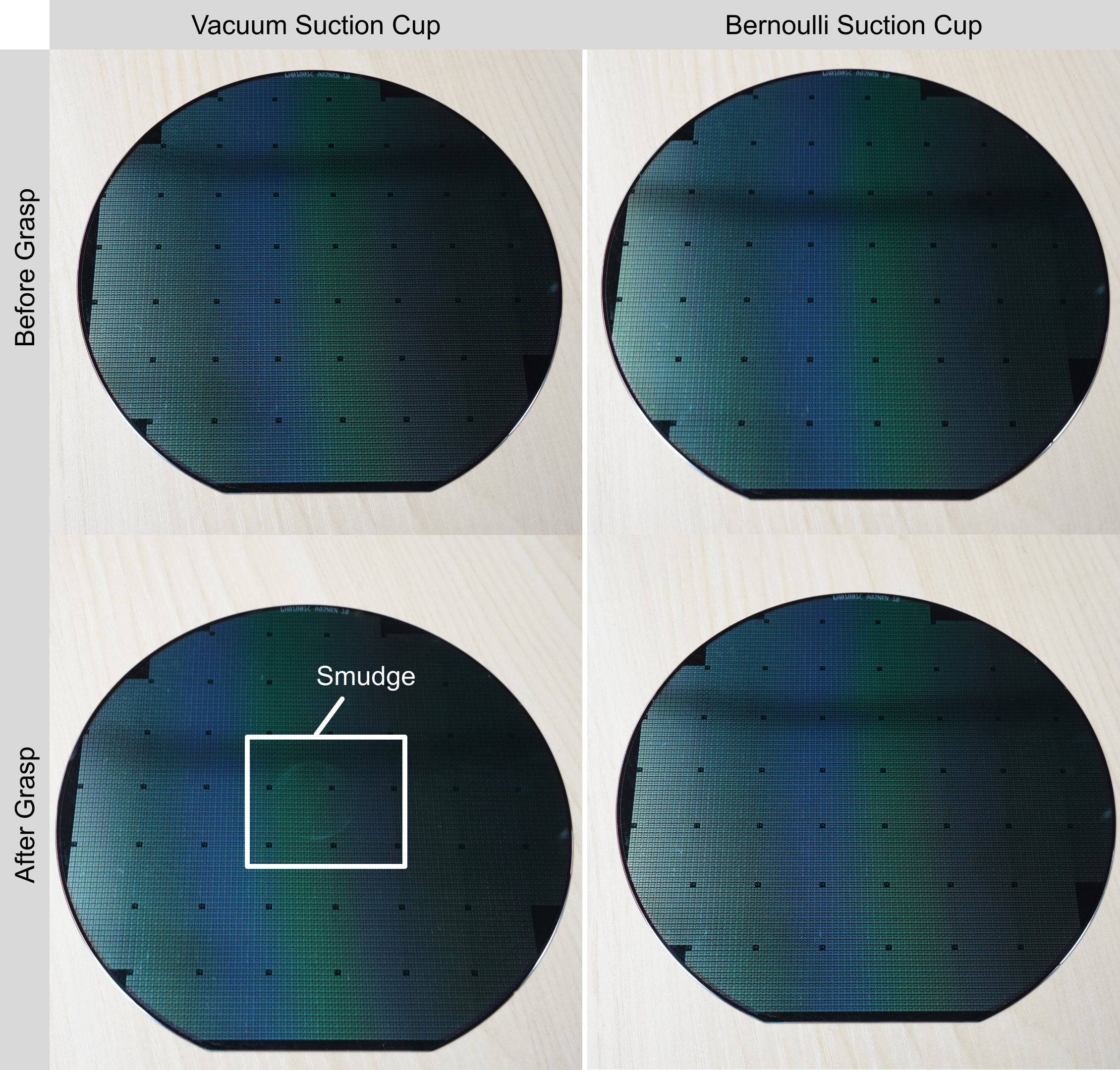

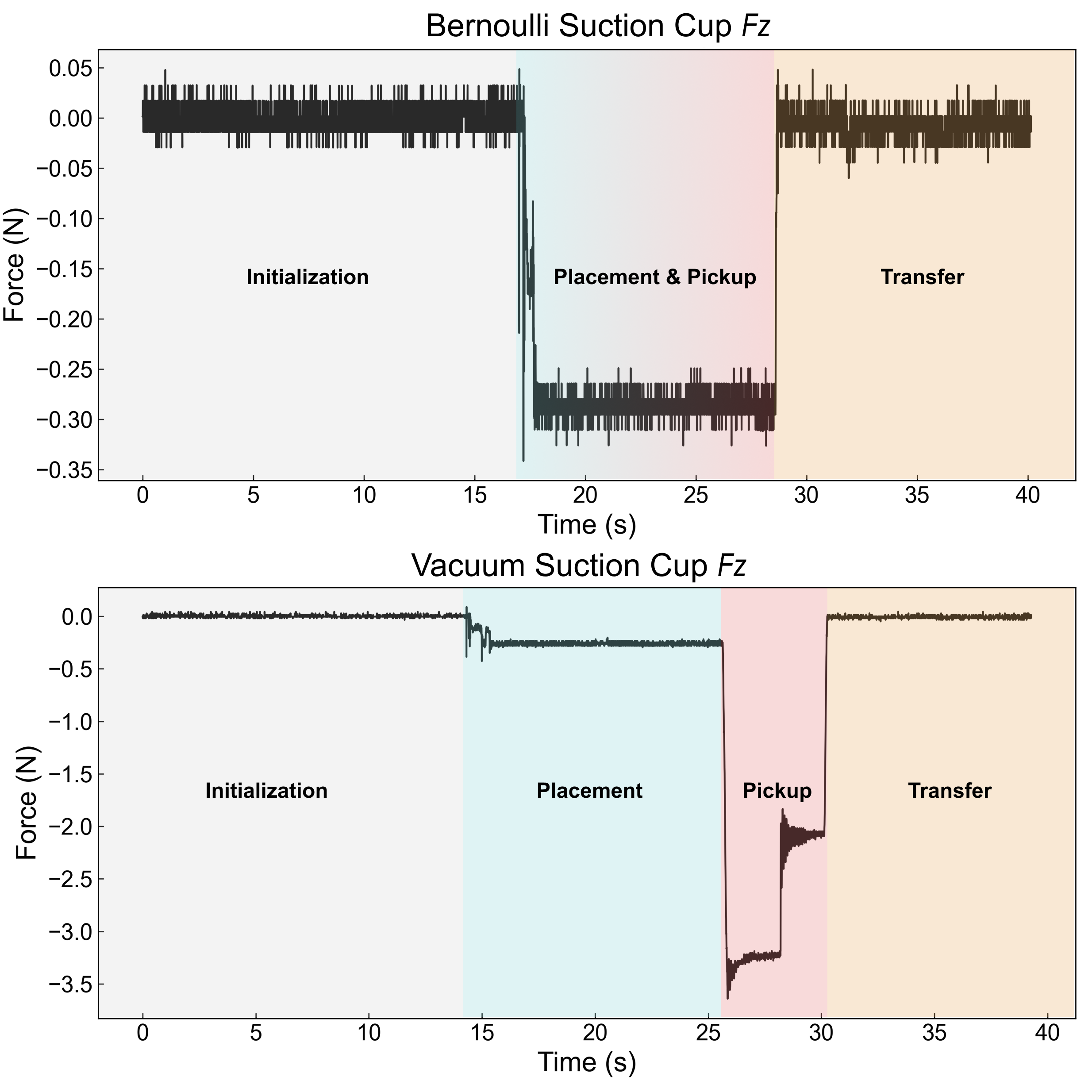

Conventional suction cups lack the sensing capabilities needed for contact-aware manipulation in unstructured environments. FlexiCup is a multimodal suction cup with wireless electronics that integrates dual-zone vision-tactile sensing within a single optical system. The central zone switches dynamically between vision and tactile modalities via LED illumination control, while the peripheral zone provides continuous spatial awareness. The modular mechanical design supports both vacuum (sustained-contact adhesion) and Bernoulli (contactless lifting) actuation with the same dual-zone sensing architecture, demonstrating that the sensing and actuation principles can be decoupled and chosen independently. Key specifications include a maximum normal force of 41.5 N and 640×480 image streaming at 30 Hz over Wi-Fi.
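The dual-zone behavior described above can be illustrated with a minimal Python sketch. It is not FlexiCup's actual firmware or API. The class and function names, the circular zone boundary, the 120-pixel radius, and the mapping "LEDs on = tactile" are all assumptions made for illustration. Only the 640×480 resolution and 30 Hz frame rate come from the text. The bandwidth helper further assumes uncompressed frames at 3 bytes per pixel, which the text does not state.

```python
from dataclasses import dataclass
from enum import Enum

# Frame geometry and rate as stated in the text.
FRAME_WIDTH, FRAME_HEIGHT, FRAME_RATE_HZ = 640, 480, 30


class CentralMode(Enum):
    VISION = "vision"
    TACTILE = "tactile"


@dataclass
class DualZoneSensor:
    """Hypothetical model of one camera frame split into two zones."""

    central_radius_px: int = 120  # assumed zone boundary, not from the source
    led_on: bool = False          # assumed: LEDs off at start-up

    def set_mode(self, mode: CentralMode) -> None:
        # Assumption: illuminating the LEDs selects the tactile modality.
        self.led_on = mode is CentralMode.TACTILE

    @property
    def central_mode(self) -> CentralMode:
        return CentralMode.TACTILE if self.led_on else CentralMode.VISION

    def zone_of(self, x: int, y: int) -> str:
        # Assumed circular central zone centered in the frame.
        cx, cy = FRAME_WIDTH / 2, FRAME_HEIGHT / 2
        inside = (x - cx) ** 2 + (y - cy) ** 2 <= self.central_radius_px ** 2
        return "central" if inside else "peripheral"

    def active_modality(self, x: int, y: int) -> str:
        # The peripheral zone is always on; only the central zone switches.
        if self.zone_of(x, y) == "peripheral":
            return "spatial"
        return self.central_mode.value


def raw_stream_mbps(bytes_per_pixel: int = 3) -> float:
    """Uncompressed data rate in Mbit/s under the assumed pixel format."""
    bits_per_second = FRAME_WIDTH * FRAME_HEIGHT * FRAME_RATE_HZ * bytes_per_pixel * 8
    return bits_per_second / 1e6


if __name__ == "__main__":
    sensor = DualZoneSensor()
    sensor.set_mode(CentralMode.TACTILE)
    print(sensor.active_modality(320, 240))  # center pixel
    print(sensor.active_modality(0, 0))      # corner pixel
    print(raw_stream_mbps())
```

In this sketch the actuation method (vacuum or Bernoulli) never appears, which mirrors the decoupling claim: the sensing model is the same regardless of how the cup generates lifting force.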